As a photographer, you usually want to capture a scene as accurately as possible, especially when you are into product photography or art reproductions. But most camera sensors have some flaws and are therefore limited. A few cameras these days have revived an old technique to get the maximum out of a sensor: pixel shifting. Let’s see how this performs in the recently launched Sony A7R III.

CONTENTS: Sensor designs with flaws | Old technology becomes hot again | Does it deliver? | How much more information? | Should you use this? | Why is this important?

Sensor designs with flaws

Camera sensors usually have two flaws in their designs: the ‘color filter array’ and the optical low-pass filter (OLPF) or anti-aliasing (AA) filter.

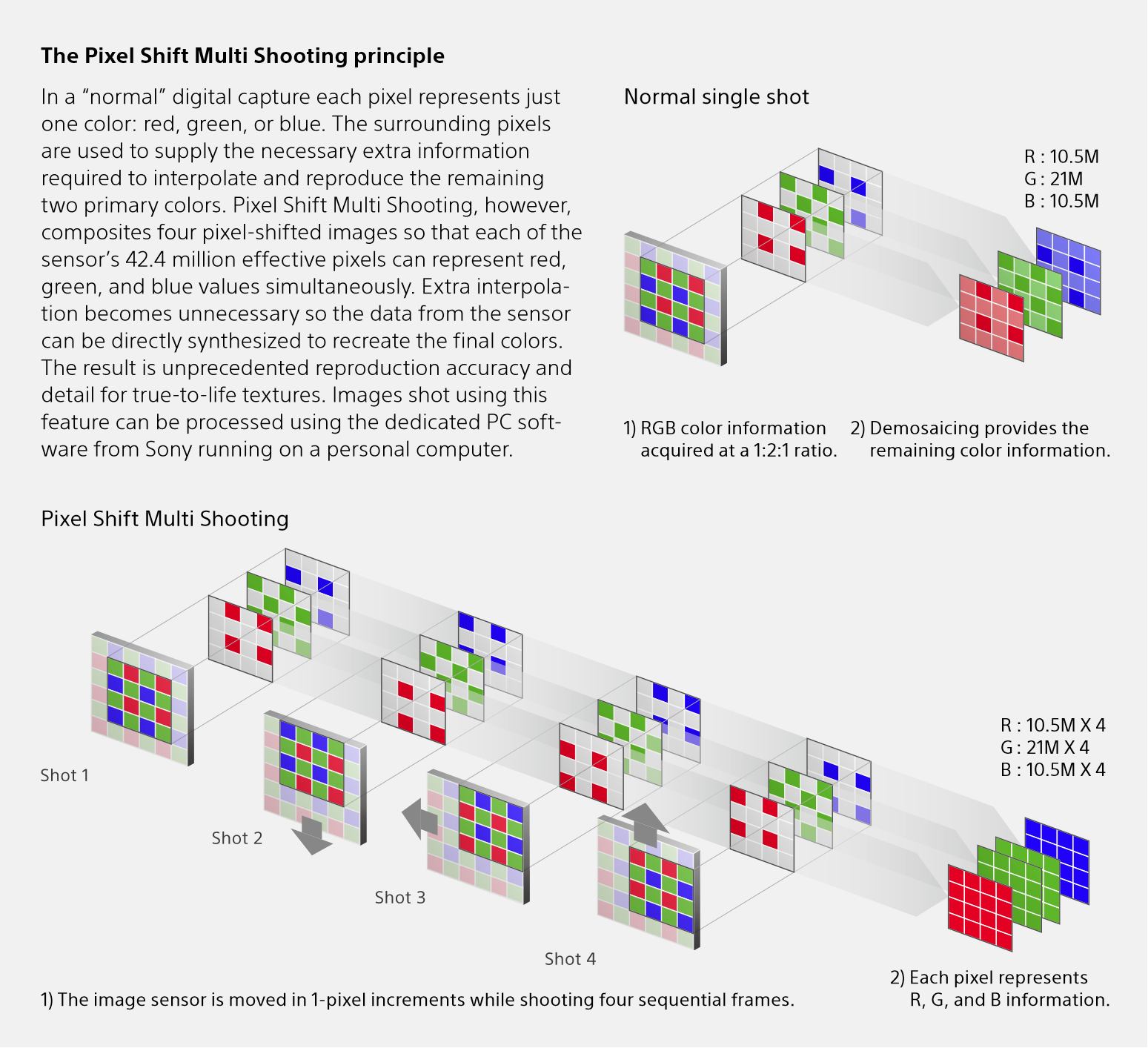

The first one is responsible for getting color information: each pixel gathers only one specific color, either Red, Green or Blue (RGB). The layout of those RGB-pixels can differ, but the most used format is the Bayer-filter. When looking at a 2×2 pixel square, one pixel will be Red, one will be Blue and two will be Green.

Because the pixel only contains information from one of the three primary colors, software will be used to fill the gaps: demosaicing. This demosaicing software will calculate the missing color information based on the surrounding pixels. E.g. for the Red pixel, the Green and Blue will be a calculated value, based on the neighboring Green and Blue pixels. This calculation is either done in camera (if the images are captured as JPEGs), or in post-processing with a ‘RAW converter’ (e.g. Adobe Lightroom, DxO PhotoLab). So the color information in an image is partly captured, partly calculated. Which means: there is a (small) loss of accuracy.
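To make the "partly captured, partly calculated" point concrete, here is a minimal sketch in plain Python. It assumes an RGGB Bayer layout and a very naive demosaicing rule (averaging the neighbours in a 3×3 window); the function names are mine, and real RAW converters use far more sophisticated algorithms.

```python
def bayer_channel(row, col):
    """Colour recorded at a sensor site, assuming an RGGB Bayer layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def mosaic(image):
    """Keep only the one channel each pixel's filter lets through.

    image is a list of rows of (r, g, b) tuples; the result is a list of
    rows of single values, like a RAW file.
    """
    index = {"R": 0, "G": 1, "B": 2}
    return [[px[index[bayer_channel(r, c)]] for c, px in enumerate(row)]
            for r, row in enumerate(image)]


def demosaic(raw):
    """Naive demosaicing: a missing channel becomes the average of the
    neighbours (3x3 window) that did measure it. Assumes at least 2x2 pixels."""
    h, w = len(raw), len(raw[0])
    out = []
    for r in range(h):
        out_row = []
        for c in range(w):
            px = []
            for ch in "RGB":
                if bayer_channel(r, c) == ch:
                    px.append(raw[r][c])  # measured value
                else:
                    vals = [raw[rr][cc]
                            for rr in range(max(0, r - 1), min(h, r + 2))
                            for cc in range(max(0, c - 1), min(w, c + 2))
                            if bayer_channel(rr, cc) == ch]
                    px.append(sum(vals) / len(vals))  # calculated value
            out_row.append(tuple(px))
        out.append(out_row)
    return out


# A flat colour survives the round trip, a hard colour edge does not.
flat = [[(10, 20, 30)] * 4 for _ in range(4)]
edge = [[(255, 0, 0), (255, 0, 0), (0, 0, 255), (0, 0, 255)] for _ in range(4)]
print(demosaic(mosaic(flat)) == flat)
print(demosaic(mosaic(edge)) == edge)
```

In this toy model the loss of accuracy shows up exactly where neighbouring pixels differ, which is where the detail is.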

The second flaw is the OLPF or AA-filter. This is a filter right in front of the sensor that will ‘blur’ the image a little bit, making it less sharp. The reason is to prevent moiré, which is caused by the interference of patterns, in this case, a pattern in the subject (e.g. a fabric like silk) and the pixel layout. Moiré is something you can see on TV when somebody wears a shirt with stripes: you will see a weird structure, weird colors.
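The interference behind moiré can be sketched in one dimension. The example below is a simplification under my own assumptions: a stripe pattern that repeats every 1.1 pixels (an arbitrary value) and a sensor that samples exactly one point per pixel, which ignores the real pixel area and any AA-filter.

```python
import math

STRIPE_PERIOD = 1.1  # the fine pattern repeats every 1.1 pixels (assumed value)


def stripe(x):
    """Brightness of the fine stripe pattern at position x (in pixels)."""
    return math.cos(2 * math.pi * x / STRIPE_PERIOD)


def sample(n_pixels):
    """Point-sample the pattern once per pixel."""
    return [stripe(x) for x in range(n_pixels)]


samples = sample(23)

# A much coarser pattern, repeating every 11 pixels, gives the same samples.
coarse = [math.cos(2 * math.pi * x / 11) for x in range(23)]

for x, (fine, fake) in enumerate(zip(samples, coarse)):
    print(x, round(fine, 3), round(fake, 3))
```

The sensor cannot tell the fine stripes from a coarse pattern that was never in the subject, and that false pattern is what you see as moiré.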

As sensor resolution has increased strongly, the need for an AA-filter has become less pressing, making it a design choice. Multiple camera manufacturers now offer camera models without AA-filter, e.g. the Nikon D850, Sony A7R (I, II, III) and Canon EOS 5DS R.

Old technology becomes hot again

Pixel shifting multi-shooting is not a new technology: the Leaf Cantare XY, launched in 2000 if I’m not mistaken, already had a three-shot pixel shifting mode. What is new is that this technology is now integrated into mainstream cameras, as a side effect of in-body image stabilization: if you can move your sensor a little bit to compensate for movement of the body, why not use that to move it exactly one pixel to create better images? That’s an interesting approach to innovation: using existing technology in new ways.

The way pixel shifting multi-shot is implemented can differ. Olympus (the first to have it in a mainstream camera: the OM-D E-M5 Mark II) takes eight pictures and shifts by half a pixel. Pentax (K-3 II) and Sony (A7R III) use four shots and a one-pixel movement.
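The four-shot, one-pixel variant can be sketched as follows. This is an idealized model of my own, not Sony’s or Pentax’s actual algorithm: it assumes an RGGB layout, a perfectly static scene, no noise, and a shift order that I picked for illustration.

```python
def bayer_channel(row, col):
    """Channel index (0=R, 1=G, 2=B) of an RGGB Bayer filter at a sensor site."""
    if row % 2 == 0:
        return 0 if col % 2 == 0 else 1
    return 1 if col % 2 == 0 else 2


# Sensor offsets in whole pixels for the four exposures (order is an assumption).
SHIFTS = [(0, 0), (0, 1), (1, 1), (1, 0)]


def capture(scene, shift):
    """One exposure: scene point (r, c) falls under the filter at (r+dr, c+dc)."""
    dr, dc = shift
    return [[px[bayer_channel(r + dr, c + dc)] for c, px in enumerate(row)]
            for r, row in enumerate(scene)]


def combine(shots):
    """Merge (shift, raw) pairs into one image with measured R, G and B everywhere."""
    first_raw = shots[0][1]
    h, w = len(first_raw), len(first_raw[0])
    out = []
    for r in range(h):
        out_row = []
        for c in range(w):
            samples = {0: [], 1: [], 2: []}
            for (dr, dc), raw in shots:
                samples[bayer_channel(r + dr, c + dc)].append(raw[r][c])
            # One red, two green and one blue measurement per point.
            out_row.append(tuple(sum(v) / len(v) for v in samples.values()))
        out.append(out_row)
    return out


def pixel_shift(scene):
    """Simulate the four exposures and merge them."""
    return combine([(s, capture(scene, s)) for s in SHIFTS])


scene = [[(r * 10 + c, 100 + r, 200 - c) for c in range(4)] for r in range(4)]
print(pixel_shift(scene) == scene)
```

Because every point ends up under a red, a blue and two green filters, nothing has to be interpolated in this model. Anything that moves between the exposures breaks that assumption.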

Does it deliver?

I did a few tests with the Sony A7R III. The workflow was always the following: I took pictures with identical settings, the camera mounted on a tripod, first the ‘regular’ shot, then the ‘pixel shifting’ shot. Images were exported as JPEGs, with maximum quality, using the Sony Imaging Edge software and default settings. That tool also combines the four shots into one. Then I compared the images in Adobe Photoshop on a calibrated 4K monitor.

At this moment the Sony Imaging Edge software is the only tool to combine the four images. I guess we will see other companies implementing this in the (near) future in their RAW conversion tools.

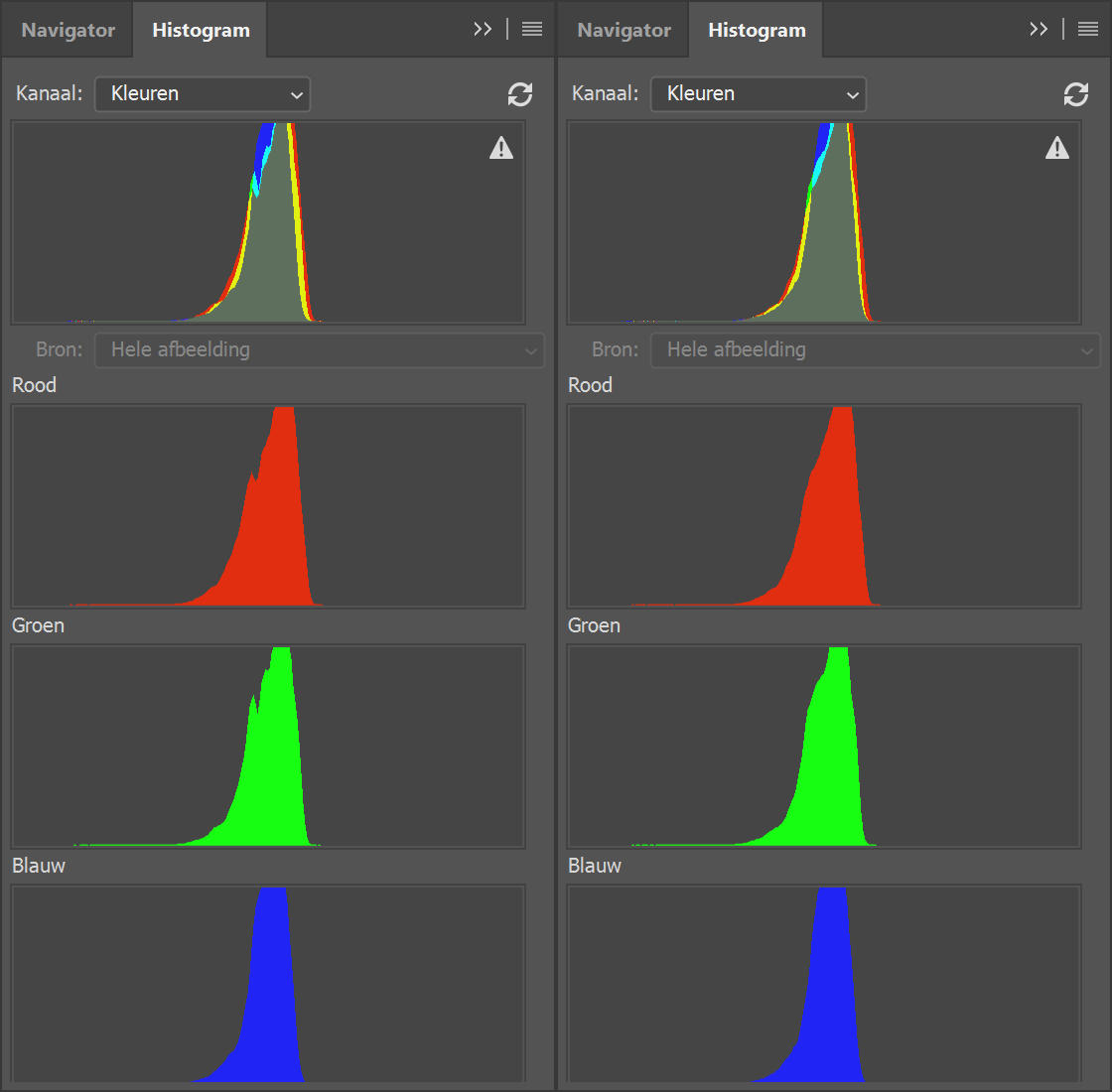

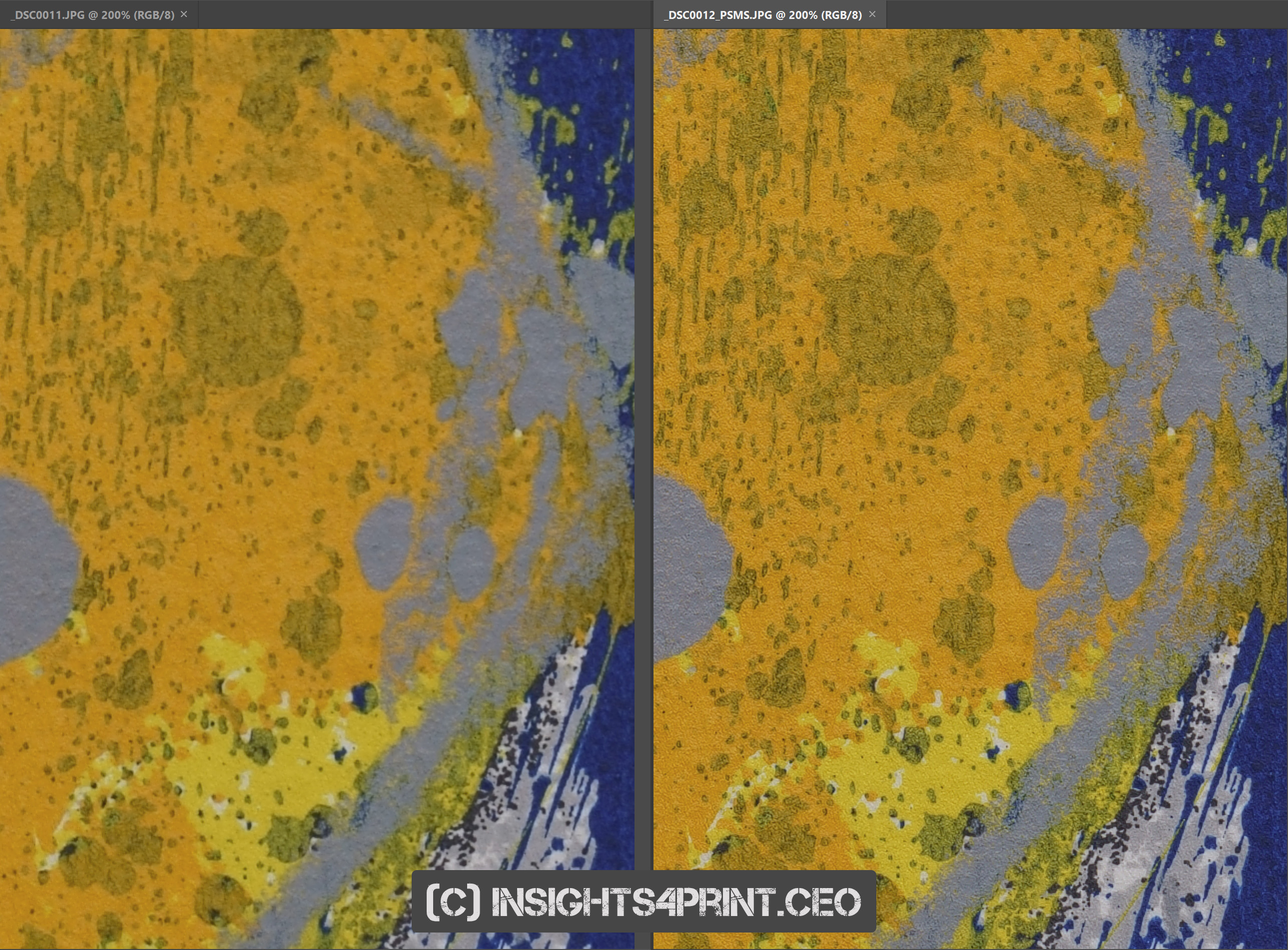

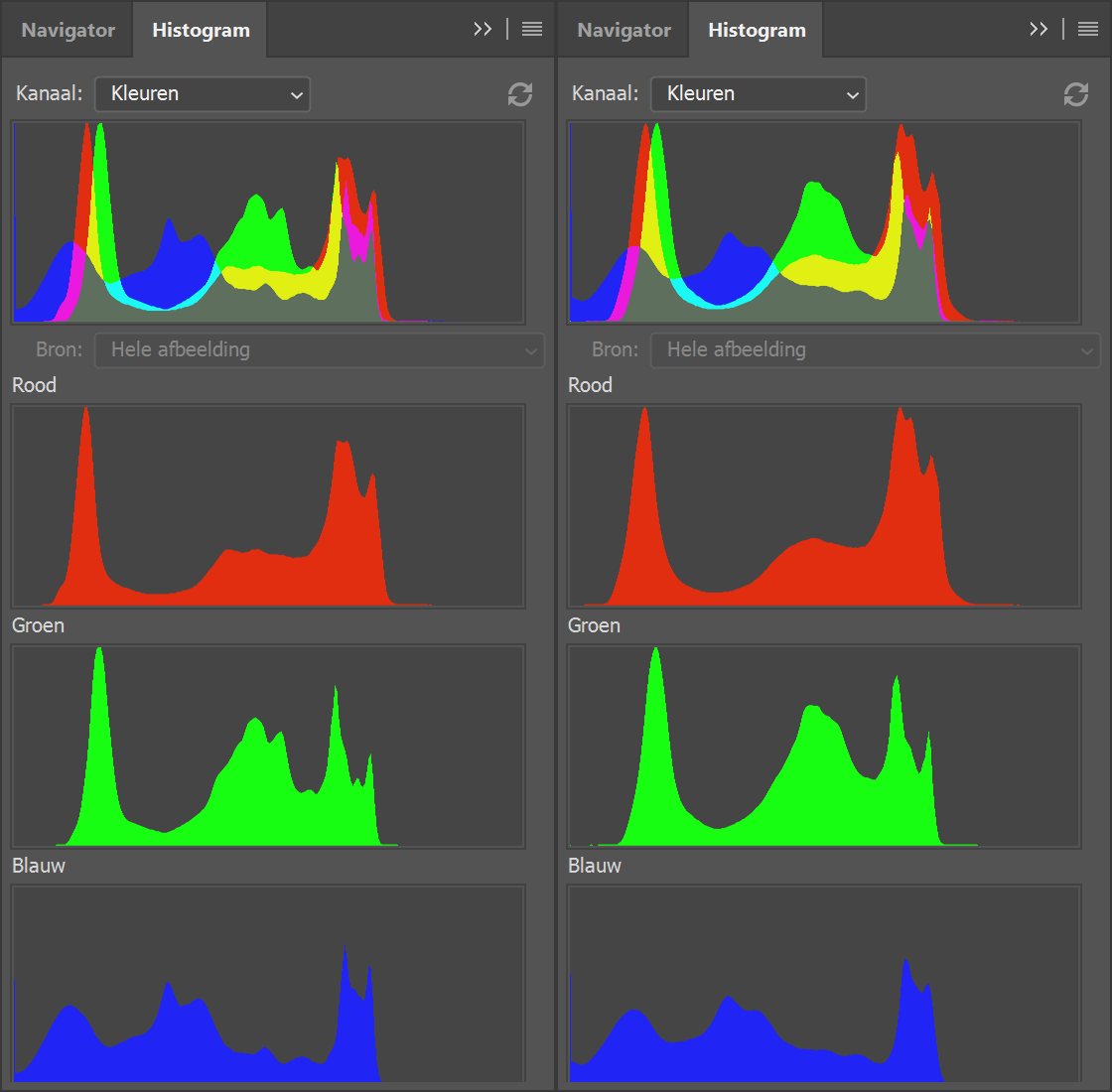

The first shot was a piece of marble. When compared at 100% (the first picture below) you can see that there is more and better information in the PSMS (pixel shifting multi-shot) image on the right. When going to 200% (the second picture below), the difference becomes very clear. The histogram of the regular shot shows a dent in some curves, while the PSMS histogram doesn’t. This shows the effect of the demosaicing procedure.

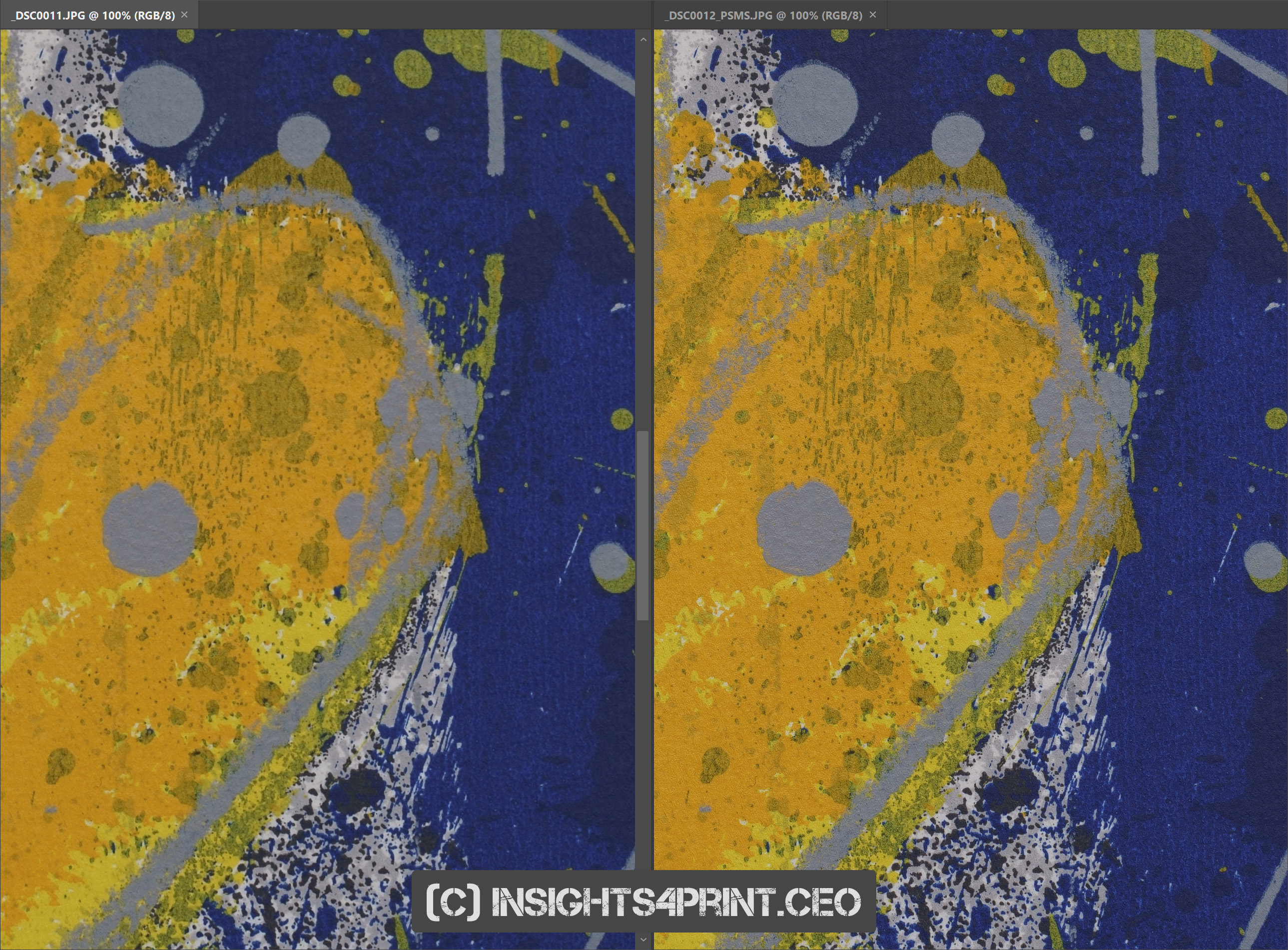

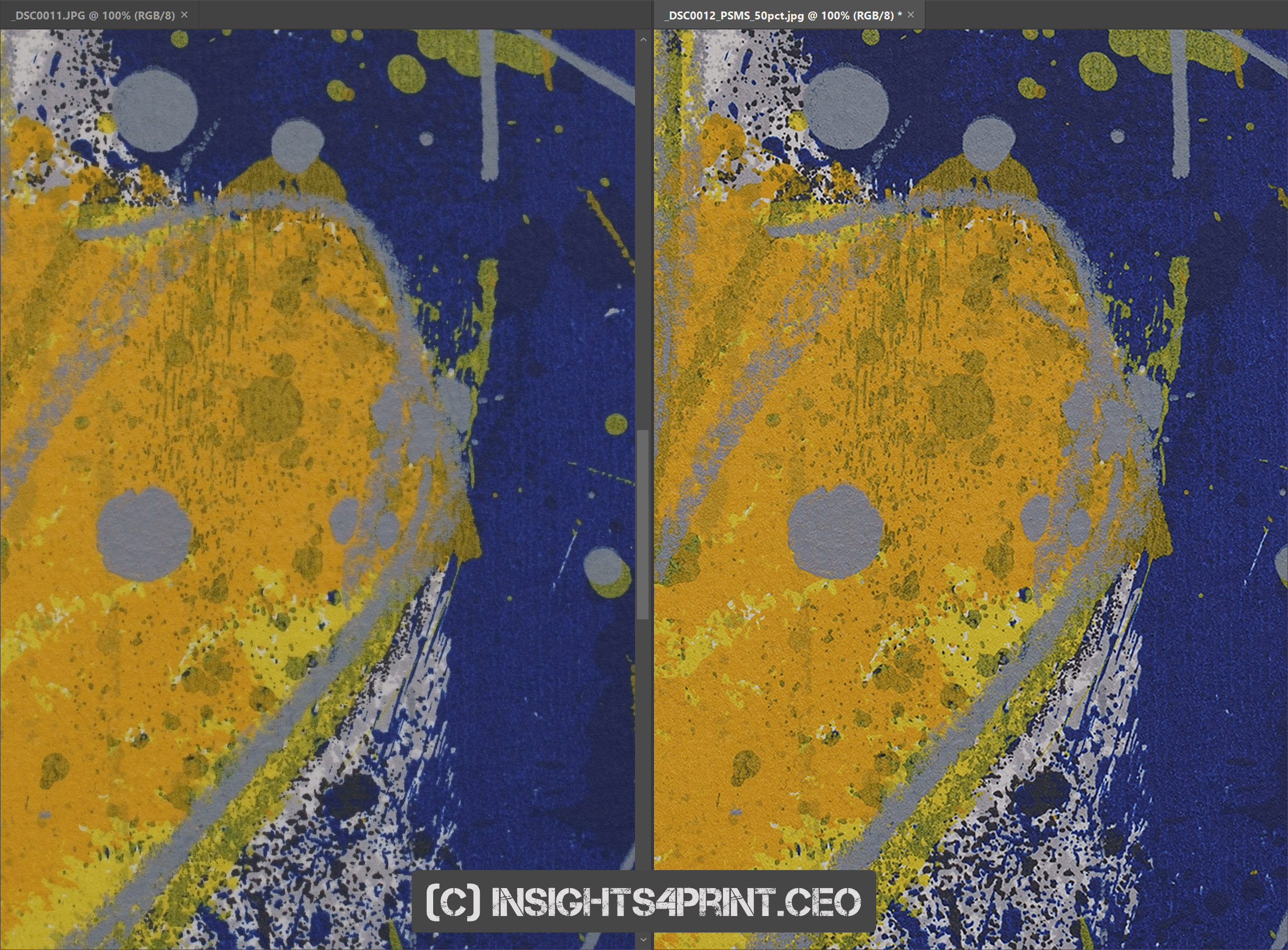

The second shot is a piece of art, a screen print, with very vibrant yellow and blue colors. Here, once again, the 100% comparison already shows differences, and at 200% they become very clear. The PSMS picture even has a ‘3D like’ structure to it. The histogram shows that the colors are more vibrant in the PSMS, which can be a plus for people making reproductions of artwork.

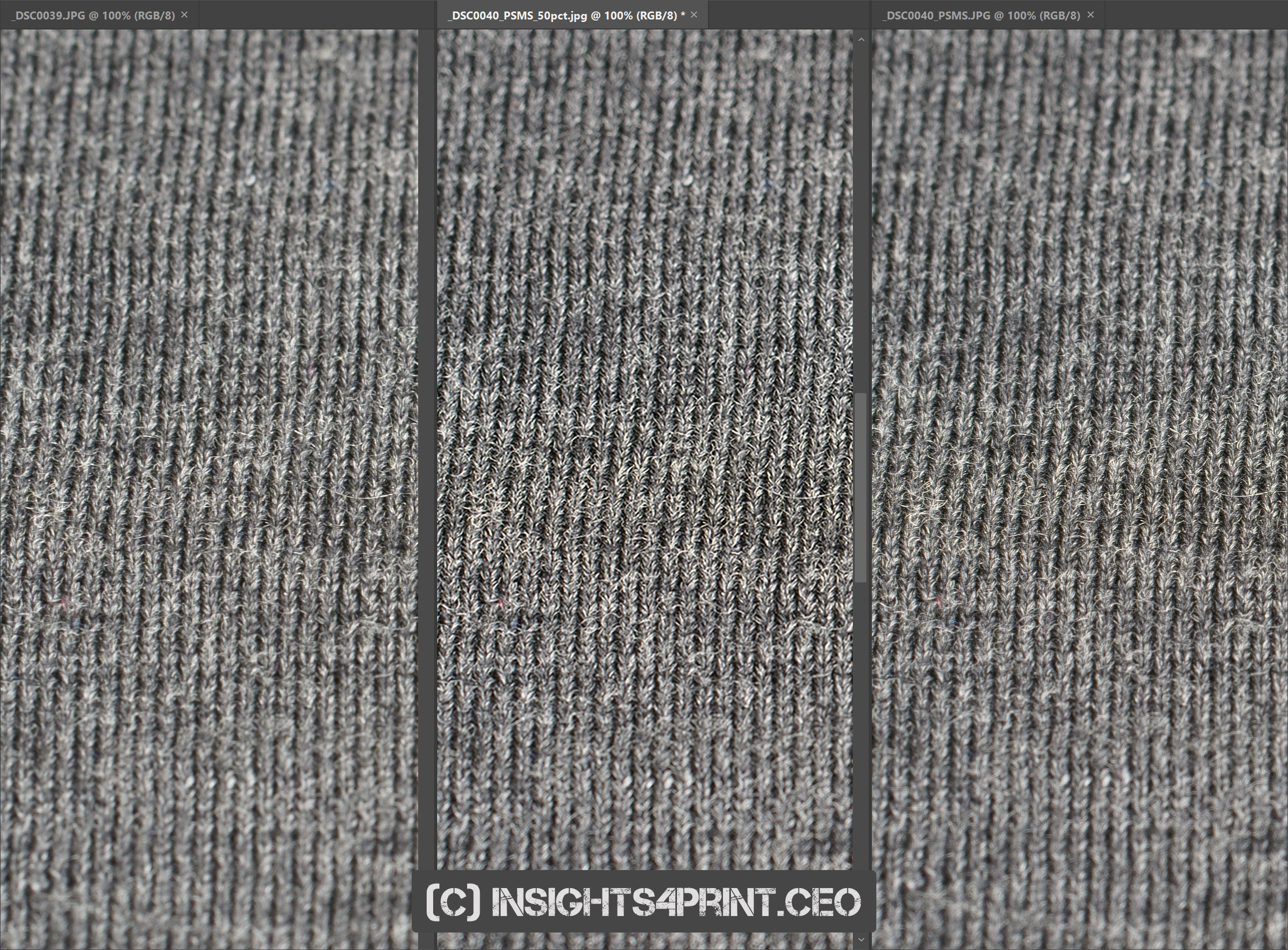

The third shot is of fabric: wool. Here too the story is the same: there is more and better detail (image below at 200%).

How much more information?

Now the big question is of course how much more information does the PSMS capture? To get an idea, I did the following: I downsampled the three PSMS images to a certain degree and then upsampled them again to the original size, using Adobe Photoshop and the default settings.

When downsampling the art image to 25% of the original pixel count (3976 x 2652 pixels, so half the width and half the height) and then upsampling it again to the original size (7952 x 5304 pixels), it seems to me that it still has more detail than the regular image. That’s amazing!

With the other images, it depends: the marble seems a bit better (especially the color quality is still better). The fabric looks a bit too contrasty, but the detail seems at least at the same level as the regular image.
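The idea behind this round trip can be sketched in a few lines. Note the swapped-in technique: I use a 2×2 box average for downsampling and pixel repetition for upsampling, not Photoshop’s default resampling, and the test patterns are made up for illustration.

```python
def downsample2(img):
    """Halve width and height by averaging each 2x2 block (even sizes assumed)."""
    return [[(img[r][c] + img[r][c + 1] + img[r + 1][c] + img[r + 1][c + 1]) / 4
             for c in range(0, len(img[0]) - 1, 2)]
            for r in range(0, len(img) - 1, 2)]


def upsample2(img):
    """Double width and height by repeating each pixel."""
    out = []
    for row in img:
        wide = [v for v in row for _ in range(2)]
        out += [wide, list(wide)]
    return out


def round_trip_error(img):
    """Mean absolute difference between an image and its down/up-sampled copy."""
    back = upsample2(downsample2(img))
    diffs = [abs(a - b) for ra, rb in zip(img, back) for a, b in zip(ra, rb)]
    return sum(diffs) / len(diffs)


# Detail at the scale of one pixel versus detail at the scale of two pixels.
checker = [[255 * ((r + c) % 2) for c in range(8)] for r in range(8)]
blocks = [[255 * ((r // 2 + c // 2) % 2) for c in range(8)] for r in range(8)]

print(round_trip_error(checker))
print(round_trip_error(blocks))
```

Only detail at the scale of a single pixel is destroyed by the round trip. So if a round-tripped PSMS image still holds up against the regular shot, one possible reading is that the regular shot carries little real detail at that finest scale.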

So how should we interpret this? You could say that, at least in some situations, you get four times as much information, so comparable to a 168-megapixel Bayer-sensor. But you could also say that now that we’ve ditched the AA-filter and circumvented the demosaicing, we are finally getting the full resolution of the sensor, in the case of the A7R III: 42 megapixels…
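The numbers behind both readings are easy to check. The sketch below only counts samples, using the image size quoted earlier; the variable names are mine.

```python
WIDTH, HEIGHT = 7952, 5304  # image size of the A7R III files quoted above

pixels = WIDTH * HEIGHT
single_shot_samples = pixels      # one colour value measured per pixel
four_shot_samples = 4 * pixels    # R, G, G and B measured per pixel
full_rgb_values = 3 * pixels      # values in a finished RGB image

print(f"{pixels / 1e6:.1f} megapixels")
print(f"single shot measures {single_shot_samples / full_rgb_values:.0%} "
      f"of the RGB values, the rest is calculated")
print(f"four shots measure {four_shot_samples / 1e6:.1f} million samples")
```

That gives roughly 42 million pixels and about 169 million measured samples: four times the data of a single shot, but still describing the same 42 million image points.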

Should you use this?

Knowing that PSMS does deliver superior quality, should you order a Sony A7R III (or another camera capable of doing this trick)? If you are into product photography or reproduction of artwork, yes. It will definitely be an improvement. And even if you are not in the Sony-system, there are lens adapters available for most other mounts, some even with excellent AF (e.g. for Canon). And remember: this kind of quality hasn’t been available at this price point until now. This used to be the realm of medium format cameras.

What I should also mention is that the use of calibration tools like the X-Rite ColorChecker is highly recommended in that kind of workflow, especially for art reproductions and probably also fashion. You want to get everything right, especially the color.

Some people will certainly like to use it for landscape photography. It might be nice, but there is a downside: since you are taking four pictures and, in the Sony implementation, there is a delay of at least 1 second between the pictures, you will get weird effects with moving objects… I did one test with a landscape. Fortunately for me, there was no wind and no movement at that time, so it did work. But I’ve also seen pictures that look very bizarre, due to moving objects.

Why is this important?

With this technology, the quality of studio shots and art reproductions can make a jump forward. And in those types of photography, that increase in quality can be important. Especially when looking at the reproduction of artwork, I’ve seen a number of new approaches to get more faithful reproductions, each more complex than the last. With this pixel shifting technology, we can already improve on the image capturing part, with mainstream cameras. And then comes the next part: the faithful reproduction in print, which might be another challenge.

From an innovation point of view, PSMS is also interesting: it shows how an existing technology (in-body image stabilization) can be used for a completely different goal. And that’s an important challenge for innovation teams: finding new applications for existing technologies.

PS

For the record: I’m not paid for this review, but I have been a Sony/Minolta user for ages (since 1983).

There are some alternatives to the Bayer-filter. The best known is the Fuji X-trans, which has a different layout: 4 green pixels are grouped into a big square. But other kinds of pixels are also possible:

- RGBW, with one pixel being white (transparent)

- RGBE, with an emerald pixel

- CYGM, with cyan, yellow, green and magenta.

An even more exotic design is Foveon, which works in a similar way to old color film: different layers have different sensitivities. Although Foveon was already launched in 2002, its success has been very limited. But next to Foveon (which is now a part of Sigma), others have also patented this kind of sensor.

And then there is also the field of ‘multi-spectral imaging’, which is even more powerful, but also more complicated. The research and demo projects I’ve seen for this were in the field of art reproduction. And it’s not limited to color capturing: the color reproduction is also more complex than regular CMYK printing.
